GOSTA: An Open Governance Specification for Autonomous AI Agents

AI agents are making decisions. They're recommending hires, allocating budgets, running content strategies, evaluating vendors, and prioritizing product roadmaps. The scope and autonomy of these decisions are growing every quarter.

Or rather — we want them to. We want agents making these decisions within defined authority, bounded autonomy, traceable reasoning, and a formal mechanism to stop what isn't working. In practice, most operate without any of that.

Most of the tooling in this space focuses on execution mechanics — how agents act, in what sequence, with what tools. Orchestration frameworks have made it straightforward to coordinate agent workflows. But execution is only part of the problem.

Who decides what the agent is allowed to do? Within what bounds? What happens when a strategy fails — not a technical error, but a strategic failure where the approach isn't working? Who kills it, based on what criteria, with what audit trail? How does the system prove its reasoning is sound?

GOSTA is an open specification that addresses the full lifecycle: from strategic intent through orchestration, coordination, and execution to governance. It operates at the specification level and does not depend on any orchestration framework. It has been extensively tested with Claude (Cowork and Code) and is expected to work with Codex; other providers may require adaptation.

Why We Developed It

GOSTA was not built as a theoretical exercise. Two things drove it.

First, Cybersol is becoming an AI-native organization. We use AI agents across our own workflows — strategic planning, product development, content operations. The governance question was not theoretical. We needed formal structures for what those agents could decide, when they should escalate, and how we would audit their reasoning.

Second, our product (both production and capabilities) demanded it. Obligo, Cybersol's Cyber Liability Operating System — currently in development — governs what happens when a cybersecurity incident occurs.

GOSTA was born from both of these needs. The specification we are open-sourcing is the same architecture we rely on.

What GOSTA Is

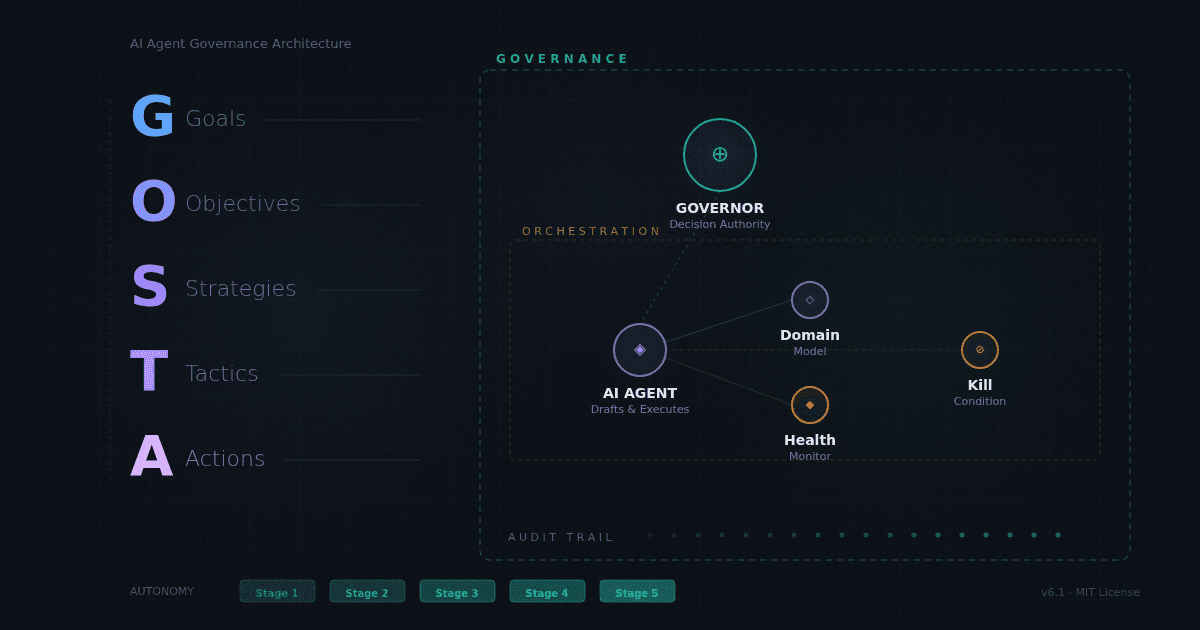

GOSTA stands for Goals, Objectives, Strategies, Tactics, Actions — a five-layer hierarchical control system for governing autonomous AI agents.

The architecture works like this. A human Governor — the person with decision authority over the scope being governed — approves an operating document that defines the strategic plan across all five layers. The AI system executes autonomously within those approved bounds. Health monitoring tracks whether each layer is performing. Formal kill conditions, defined at every strategy and tactic, determine when a bet is failing and should be stopped.

Consider a concrete example. A marketing team is using AI to execute a content strategy. The Governor (marketing director) approves an operating document: the goal is to increase qualified inbound leads by 30% in Q3. Objectives break this into measurable targets. Strategies define the approach — thought leadership content paired with SEO optimization. Tactics specify experiments: a blog series on industry trends, a gated whitepaper on a specific topic. Each tactic has a hypothesis and kill conditions — if the blog series doesn't generate 50 qualified visits per post within 4 weeks, it gets killed and the resources shift.
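As a rough illustration, the marketing example can be written down as nested data with an approval gate. This is a minimal sketch, not the specification's own schema: the class names, the `approved_by` field, and the `executable_tactics` method are all hypothetical, and the objective text is a placeholder because the example above does not spell one out.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Tactic:
    name: str
    actions: list[str] = field(default_factory=list)


@dataclass
class Strategy:
    name: str
    tactics: list[Tactic] = field(default_factory=list)


@dataclass
class Objective:
    name: str
    strategies: list[Strategy] = field(default_factory=list)


@dataclass
class OperatingDocument:
    """Hypothetical container for the five layers (not the spec's format)."""
    goal: str
    objectives: list[Objective] = field(default_factory=list)
    approved_by: Optional[str] = None  # stays None until the Governor approves

    def executable_tactics(self) -> list[str]:
        # Assumption: nothing may run before the Governor has approved the document.
        if self.approved_by is None:
            raise PermissionError("operating document not approved by a Governor")
        return [
            tactic.name
            for objective in self.objectives
            for strategy in objective.strategies
            for tactic in strategy.tactics
        ]


doc = OperatingDocument(
    goal="Increase qualified inbound leads by 30% in Q3",
    objectives=[
        Objective(
            name="Measurable target derived from the goal (placeholder)",
            strategies=[
                Strategy(
                    name="Thought leadership content paired with SEO optimization",
                    tactics=[
                        Tactic("Blog series on industry trends"),
                        Tactic("Gated whitepaper on a specific topic"),
                    ],
                )
            ],
        )
    ],
)
```

Calling `doc.executable_tactics()` before `doc.approved_by` is set raises `PermissionError`. Once the marketing director is recorded as the approver, it returns the two tactic names.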

The AI drafts this plan. The Governor reviews, modifies, and approves it. The AI executes: generating content, scheduling, measuring engagement, computing health scores. When a tactic's kill conditions trigger, the AI surfaces the recommendation. The Governor decides. The cycle repeats.
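The recommend-then-decide step can be sketched in a few lines. The example below encodes the blog-series kill condition (50 qualified visits per post within 4 weeks) and keeps the AI's recommendation separate from the Governor's decision. All names here (`KillCondition`, `ai_recommendation`, `final_decision`) are illustrative assumptions, not identifiers from the specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillCondition:
    """Hypothetical kill condition: a metric must reach a minimum within a window."""
    metric: str
    minimum: float
    window_weeks: int

    def triggered(self, observed: float, weeks_elapsed: int) -> bool:
        # Trigger only once the evaluation window has closed and the minimum was missed.
        return weeks_elapsed >= self.window_weeks and observed < self.minimum


def ai_recommendation(condition: KillCondition, observed: float, weeks_elapsed: int) -> str:
    # The AI only surfaces a recommendation; it does not act on it.
    return "kill" if condition.triggered(observed, weeks_elapsed) else "continue"


def final_decision(recommendation: str, governor_choice: str) -> dict:
    # The Governor's choice is what takes effect; the recommendation is
    # recorded alongside it so the decision stays traceable.
    return {"recommended": recommendation, "decided": governor_choice}


blog_kill = KillCondition("qualified_visits_per_post", minimum=50, window_weeks=4)
recommendation = ai_recommendation(blog_kill, observed=32, weeks_elapsed=4)
record = final_decision(recommendation, governor_choice="kill")
```

Keeping the recommendation and the decision as separate fields is a design choice of this sketch: it lets an audit trail show cases where the Governor overrode the AI.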

This is not a dashboard or a project management tool. It is a reasoning system that acts within governed bounds — with every decision traceable to an approved operating document.

Graduated autonomy controls how much the AI can decide on its own. The specification defines five stages. At Stage 1, the AI proposes and the Governor approves everything. At Stage 3, the AI executes routine tasks autonomously within approved bounds. By Stage 5, the AI operates with full autonomy within approved strategies, escalating only governance-level choices. Nine categories of Governor authority — including goal changes, guardrail modification, and kill overrides — remain formally non-delegable at any stage.

Promotion between stages requires explicit criteria and Governor approval. Demotion happens automatically when trust conditions are violated — including automatic autonomy reduction when grounding health degrades. Autonomy is earned incrementally, not granted as a binary on/off.
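The promotion and demotion rules can be sketched as a small state machine. This is an illustration under stated assumptions: the specification does not say here how far a demotion drops, so the sketch assumes one stage at a time, and only the three non-delegable categories named above are listed (the remaining six are not given in this article). `AutonomyState` and `is_non_delegable` are hypothetical names.

```python
from dataclasses import dataclass

MIN_STAGE, MAX_STAGE = 1, 5

# Only the three categories named in the text; the spec defines nine in total.
NON_DELEGABLE = frozenset({"goal_change", "guardrail_modification", "kill_override"})


def is_non_delegable(category: str) -> bool:
    # These decisions stay with the Governor at every autonomy stage.
    return category in NON_DELEGABLE


@dataclass
class AutonomyState:
    stage: int = MIN_STAGE

    def promote(self, criteria_met: bool, governor_approved: bool) -> None:
        # Promotion needs both explicit criteria and Governor approval.
        if criteria_met and governor_approved and self.stage < MAX_STAGE:
            self.stage += 1

    def demote(self, trust_violation: bool = False, grounding_degraded: bool = False) -> None:
        # Demotion is automatic; no approval is involved.
        # Assumption: drop a single stage per violation.
        if (trust_violation or grounding_degraded) and self.stage > MIN_STAGE:
            self.stage -= 1


state = AutonomyState()
state.promote(criteria_met=True, governor_approved=False)  # stays at Stage 1
state.promote(criteria_met=True, governor_approved=True)   # moves to Stage 2
state.demote(grounding_degraded=True)                      # back to Stage 1
```

The asymmetry is the point: moving up requires two independent conditions, while moving down requires only one failure signal.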

Domain models ground the AI's reasoning in real expertise rather than general training data. A domain model is a structured knowledge file that defines the vocabulary, principles, and anti-patterns for a specific field — so the AI reasons within a formal framework rather than improvising from its training corpus.

The specification includes example domain models, but the framework is domain-agnostic. A Governor managing a greenhouse would create Agriculture and Plant Science models. One governing a research lab would build Research Methodology and Domain Science models.
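To make the idea of a structured knowledge file concrete, here is a minimal sketch of a domain model holding the three parts described above (vocabulary, principles, anti-patterns) and rendering them as markdown. The `DomainModel` class, its layout, and the greenhouse entries are illustrative assumptions; the actual file format is defined by the specification, not by this example.

```python
from dataclasses import dataclass, field


@dataclass
class DomainModel:
    """Hypothetical in-memory form of a domain model file."""
    name: str
    vocabulary: dict[str, str] = field(default_factory=dict)
    principles: list[str] = field(default_factory=list)
    anti_patterns: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        # Render the three sections in a fixed order so files stay comparable.
        lines = [f"# Domain Model: {self.name}", "", "## Vocabulary"]
        lines += [f"- {term}: {meaning}" for term, meaning in self.vocabulary.items()]
        lines += ["", "## Principles"]
        lines += [f"- {item}" for item in self.principles]
        lines += ["", "## Anti-patterns"]
        lines += [f"- {item}" for item in self.anti_patterns]
        return "\n".join(lines)


# Placeholder content for the greenhouse case; a real model would be
# written by someone with domain expertise.
agriculture = DomainModel(
    name="Agriculture",
    vocabulary={"photoperiod": "daily duration of light exposure"},
    principles=["Match irrigation to measured substrate moisture"],
    anti_patterns=["Watering on a fixed schedule regardless of conditions"],
)

text = agriculture.to_markdown()
```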

Why Open Source

If AI agents are going to make or recommend decisions that affect organizations, customers, and communities, the governance layer that controls them should be auditable, forkable, and implementable by anyone. No single company should own the standard for how AI agent authority is defined and bounded.

This is the same logic that has driven open standards throughout technology's history. Protocols become more valuable — and more trustworthy — when they are publicly reviewable.

GOSTA is released under the MIT license. The entire specification lives in a single markdown file — no proprietary runtime, no vendor dependency, no infrastructure requirement. Use it, fork it, extend it, implement it in any stack. The governance model should be as inspectable as the decisions it governs.

What's Next

This is version 6.1 — a beta release. The specification is complete, Tier 0 is usable today, and Tier 1 implementation is next.

The roadmap is community-driven. Domain models for new fields — education, agriculture, scientific research, public policy — can be contributed by anyone with domain expertise. Coded implementations at Tier 1–3 are the natural next step for engineering teams who want to move beyond conversational execution.

GOSTA is a specification — it defines governance structures, not product features. The foundation has been tested internally across conditions designed to stress it. The community decides what it becomes — and version 7 should have the community's fingerprints on it.

Explore the specification: https://github.com/cybersoloss/GOSTA-OSS

Run your first governed session in 10 minutes: Walkthrough